The Deflation Paradox Isn’t Priced In

AI Task Autonomy Is Doubling Faster Than Moore's Law Ever Did, and What This Means for Money

Technology is advancing faster than at any point in human history. And almost nobody is asking the right question about what that means for money.

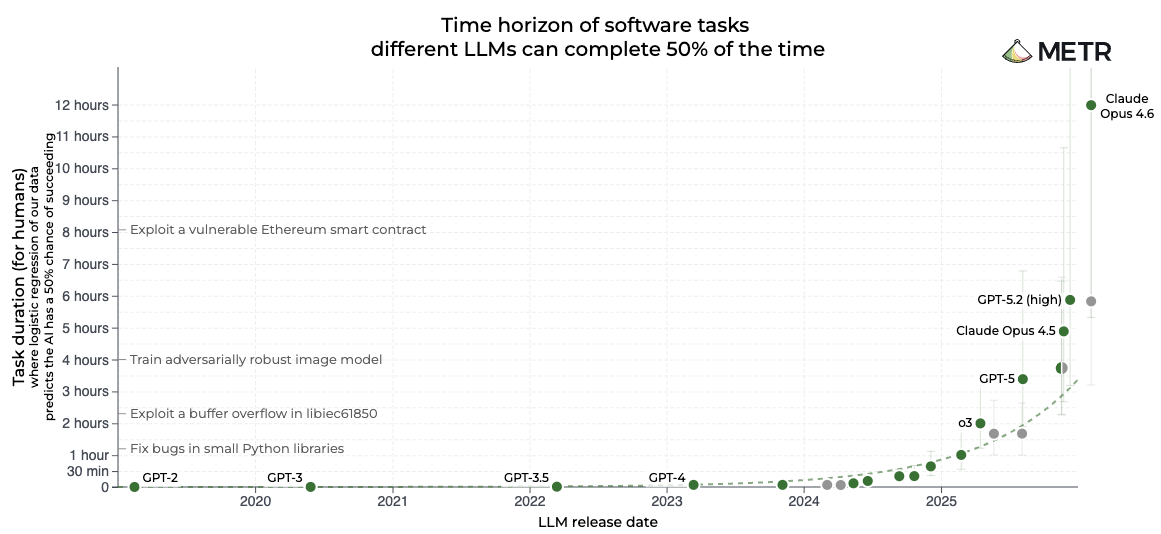

METR, an AI safety research organization, has been quietly publishing what may be the most important chart in technology. They measure the "time horizon" of frontier AI models: the length of task an AI agent can complete autonomously with 50% reliability. Their finding is that this duration has been doubling approximately every seven months over the past six years, and recently accelerated to every four months. For context, Moore's Law, the most celebrated exponential trend in technology, doubled transistor density every 18 to 24 months. AI capability is compounding three to five times faster.
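The comparison with Moore's Law is simple arithmetic on doubling periods, and it can be checked in a few lines. The sketch below is illustrative only: the function names are hypothetical, and the inputs are just the doubling periods quoted above (18 to 24 months for transistors, 7 and 4 months for AI time horizons).

```python
def speed_ratio(slow_doubling_months: float, fast_doubling_months: float) -> float:
    """How many times faster the second trend doubles than the first."""
    return slow_doubling_months / fast_doubling_months


def annual_growth_factor(doubling_months: float) -> float:
    """Multiplicative growth over 12 months for a given doubling period."""
    return 2 ** (12 / doubling_months)


if __name__ == "__main__":
    # Compare each Moore's Law bound against each AI doubling period.
    for moore in (18, 24):
        for ai in (7, 4):
            ratio = speed_ratio(moore, ai)
            print(f"Moore {moore} mo vs AI {ai} mo: {ratio:.1f}x faster doubling")

    # Express each doubling period as growth per year.
    for months in (24, 18, 7, 4):
        print(f"doubling every {months} mo: {annual_growth_factor(months):.2f}x per year")
```

Depending on which pair of periods is compared, the ratio runs from about 2.6 (18 months against 7) to 6.0 (24 months against 4), which brackets the three-to-five-times figure.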

The specific numbers tell the story. Measured at that 50% reliability threshold, GPT-4.5, released in early 2025, could complete tasks taking a human roughly 30 minutes. Claude 3.7 Sonnet reached approximately 50 minutes. By late 2025, Claude Opus 4.5 hit 4 hours and 49 minutes. If the current trajectory holds, AI agents will handle full-workday task sequences by 2027 and week-long projects by 2028.
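The extrapolation can be sketched as a pure exponential. This is a rough model, not METR's methodology: it assumes the doubling period stays constant, and it treats a "full workday" as 8 hours and a "week-long project" as 40 hours, both of which are assumptions made here for illustration. The function name is hypothetical.

```python
import math


def months_to_reach(current_hours: float, target_hours: float,
                    doubling_months: float) -> float:
    """Months until the time horizon grows from current to target,
    assuming a constant doubling period (pure exponential growth)."""
    if current_hours <= 0 or target_hours <= 0 or doubling_months <= 0:
        raise ValueError("all inputs must be positive")
    doublings_needed = math.log2(target_hours / current_hours)
    return doublings_needed * doubling_months


if __name__ == "__main__":
    start = 4 + 49 / 60  # 4 hours 49 minutes, the late-2025 figure quoted above
    targets = {"8-hour workday (assumed)": 8, "40-hour week (assumed)": 40}
    for label, hours in targets.items():
        for doubling in (4, 7):
            months = months_to_reach(start, hours, doubling)
            print(f"{label}, doubling every {doubling} mo: {months:.1f} months")
```

Under these assumptions the 8-hour mark is roughly 3 to 5 months out and the 40-hour mark roughly 12 to 21 months out, depending on whether the four-month or seven-month doubling period holds. A stricter reliability threshold than 50% would push both later.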

Moore's Law, Generalized

Gordon Moore's 1965 observation that transistor density doubles on a predictable cadence was never just about transistors. It was about the economic consequences of exponential efficiency gains: each doubling meant more compute per dollar, which expanded the addressable market for computation, which funded the next generation of chips. The semiconductor industry is now approaching $1 trillion in annual revenue in 2026, up 26% from 2025, driven by an AI infrastructure cycle that analysts are calling a "giga cycle." The efficiency gains created their own demand.

METR's time horizon data reveals the same dynamic at a higher level of abstraction. The underlying improvements (cheaper inference, more efficient architectures, and better training techniques) translate directly into longer autonomous task completion. The cost of running an AI agent on a one-hour task today is a fraction of what it cost 18 months ago. Each generation does more with less, which is the essence of Moore's Law applied not to transistors but to cognitive work itself.

Network Effects Accelerate the Curve

Metcalfe's Law states that the value of a network is proportional to the square of its participants. AI infrastructure exhibits this dynamic with unusual intensity. As more companies integrate AI agents into their workflows, they generate shared tooling, standardized APIs, plugin ecosystems, and interoperable infrastructure that makes the next adopter's integration cheaper and more capable. Every company that builds an AI-compatible workflow increases the value of the ecosystem for every other company considering adoption.
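A small sketch makes the "every adopter raises the value for every other" claim concrete. It takes Metcalfe's Law at face value (value proportional to the square of participants); the constant `k` and both function names are hypothetical, and real networks rarely follow the square law exactly.

```python
def metcalfe_value(participants: int, k: float = 1.0) -> float:
    """Network value under Metcalfe's Law: k * n^2."""
    return k * participants ** 2


def marginal_value(participants: int, k: float = 1.0) -> float:
    """Value added when one more participant joins a network of this size."""
    return metcalfe_value(participants + 1, k) - metcalfe_value(participants, k)


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(f"n={n}: total={metcalfe_value(n):.0f}, "
              f"next joiner adds {marginal_value(n):.0f}")
```

Because the marginal value works out to k(2n + 1), each new adopter adds more than the one before, which is the sense in which the next integration is worth more than the last.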

The evidence suggests this network effect is already compressing the doubling time. METR's data shows the time horizon doubling rate accelerated from every seven months (2019 through 2025) to every four months in the most recent period. This acceleration is consistent with network effects layering on top of raw compute improvements: each new model benefits not only from better hardware but from a richer ecosystem of tools, integrations, and deployment infrastructure built by the expanding network of adopters.

AI venture capital flows reflect this dynamic. AI firms captured 61% of all global venture capital in 2025, with U.S. private AI funding alone reaching $109 billion. Capital follows network effects because investors recognize that ecosystem density compounds returns, although which companies will ultimately capture long-term value remains up for debate.

Reflexivity: AI That Builds Itself

George Soros defined reflexivity as the feedback loop where participants' perceptions influence reality, which in turn shapes perceptions. The AI capability curve embodies this principle, but with a twist that no prior technology has exhibited: AI is now a primary tool in its own development.

Anthropic has disclosed that Claude Code, its AI coding agent, now writes 100% of its own codebase. The AI builds the AI that builds the AI. When the tool being improved is also the tool doing the improving, the feedback loop tightens from a human-paced iteration cycle to a machine-paced one. Each capability gain directly accelerates the next capability gain, because the more capable agent is immediately applied to the task of making itself more capable still.

This self-referential improvement cycle explains why METR's doubling time is compressing rather than plateauing. Traditional technology curves eventually decelerate as low-hanging engineering gains are exhausted and human development bottlenecks constrain iteration speed. AI development is systematically removing the human bottleneck from its own improvement loop. The engineers are still there, but they are increasingly directing AI agents that execute the work, review the code, identify the bugs, and propose the architectural changes. The cycle time between identifying an improvement and deploying it shrinks with each generation of model capability.

Enterprises adopt AI tools because competitors are adopting them, reducing the career risk of early adoption while increasing the career risk of delay. Developers build on AI platforms because that is where the users are, which attracts more users, which attracts more developers. Investors fund AI infrastructure because adoption metrics validate the thesis, and the capital itself accelerates the adoption that validates the next round of investment.

Every layer of the feedback loop is accelerating simultaneously: the models improve faster because AI builds AI, adoption grows faster because network effects lower barriers, and capital flows faster because reflexive adoption validates investment theses in compressed timeframes.

The Deflationary Reality That Nobody Prices

Technology is inherently deflationary. Every efficiency gain, every doubling of AI capability, every reduction in compute cost per unit of output represents more goods and services produced with fewer inputs. When an AI agent that builds its own successor can complete in minutes what previously required hours of skilled human labor, the real cost of that output has collapsed. When the semiconductor industry grows revenue 26% in a year while cost per transistor keeps declining, the economy's productive capacity expands faster than its input costs.

Moore's Law has been generating deflationary pressure for six decades. But the pace has never been this extreme. AI capability compounding at three to five times the rate of Moore's Law, with self-improving feedback loops compressing iteration cycles further, means deflationary pressure is intensifying on a timeline measured in months. The METR data implies that the cost of cognitive work, which represents the majority of economic output in developed economies, is entering a period of exponential decline.

One would expect this deflation to manifest as rising purchasing power. The same unit of money would buy more goods, more services, more output over time. The gains from technological progress would accrue naturally to everyone holding money.

This is not what happens under the current status quo. Central banks operate under an explicit mandate to maintain positive inflation targets, typically around 2% annually. When technology creates deflationary pressure, central banks expand the monetary base to counteract it. The result is a paradox: the fastest period of technological deflation in history is occurring simultaneously with aggressive monetary expansion. The efficiency gains exist. The productive capacity is expanding. But the value of those gains is being systematically diluted through monetary inflation before it reaches the people holding the currency.

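If AI reduces the cost of producing a unit of economic output by 30% over three years, but the money supply expands by 40% over the same period, the gain for anyone holding dollars is almost entirely erased. In a simple proportional model, prices end at roughly 0.70 × 1.40 = 0.98 of their starting level, so purchasing power that should have risen by about 43% rises by about 2%. Any expansion above roughly 43% pushes the result negative, at which point the deflation is not merely offset but overwhelmed by inflation.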
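The arithmetic behind this example can be sketched under one loud assumption: prices scale proportionally with both production cost and money supply, with nothing else changing. That is a deliberate simplification, not an economic model the article commits to, and all function names below are hypothetical.

```python
def price_level_ratio(cost_reduction: float, money_growth: float) -> float:
    """Ending price level divided by starting price level.

    Assumes prices fall in proportion to production cost and rise in
    proportion to money supply (a simplifying assumption).
    """
    return (1 - cost_reduction) * (1 + money_growth)


def purchasing_power_change(cost_reduction: float, money_growth: float) -> float:
    """Fractional change in what one unit of currency buys."""
    return 1 / price_level_ratio(cost_reduction, money_growth) - 1


def break_even_money_growth(cost_reduction: float) -> float:
    """Money growth that exactly cancels a given cost reduction."""
    return 1 / (1 - cost_reduction) - 1


if __name__ == "__main__":
    cost_cut, expansion = 0.30, 0.40  # the figures used in the text
    print(f"price level ratio: {price_level_ratio(cost_cut, expansion):.3f}")
    print(f"purchasing power change: {purchasing_power_change(cost_cut, expansion):+.1%}")
    print(f"no-expansion gain: {purchasing_power_change(cost_cut, 0.0):+.1%}")
    print(f"break-even expansion: {break_even_money_growth(cost_cut):.1%}")
```

Under this model the 30% and 40% figures land very close to the break-even point of about 42.9% expansion: nearly all of the gain is erased, and any expansion beyond that point leaves the holder worse off in absolute terms.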

This is not hypothetical. It is the observed reality of the past several decades. Productivity has risen substantially since the 1970s. Real wages, measured in purchasing power, have stagnated or declined for large segments of the population. The gains from Moore's Law, from the internet, from mobile computing, from cloud infrastructure, were captured disproportionately by asset holders rather than currency holders, because assets reprice upward with monetary expansion while currency purchasing power erodes.

Fixed Money Captures Technological Deflation

Bitcoin's fixed supply creates a fundamentally different relationship between technological progress and monetary value. Because new units cannot be issued beyond the 21 million cap, deflationary gains from technology cannot be inflated away.

If the economy's productive capacity doubles while the money supply remains constant, each unit of money commands twice the purchasing power. The technological gains accrue directly to money holders through appreciation rather than being diluted through expansion. This is the mathematical consequence of fixed supply meeting expanding output.
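One way to make this step explicit is the textbook equation of exchange. Using it here is an assumption of this sketch, since the argument above does not name a model, and holding velocity $V$ constant is a further simplification. With money supply $M$, velocity $V$, price level $P$, and real output $Q$:

```latex
\begin{align*}
M V &= P Q
  && \text{equation of exchange} \\
P &= \frac{M V}{Q}
  && \text{solve for the price level} \\
P' &= \frac{M V}{2Q} = \frac{P}{2}
  && \text{output doubles, } M \text{ and } V \text{ fixed} \\
\frac{1}{P'} &= \frac{2}{P}
  && \text{purchasing power per unit doubles}
\end{align*}
```

If velocity changes as output grows, the result no longer comes out to exactly two, so the doubling should be read as the idealized case.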

The AI capability curve makes this dynamic more urgent than at any prior point. The economy is entering a period where the cost of cognitive work falls exponentially, with self-improving AI systems compressing the timeline further. Under fiat monetary conditions, central banks will expand the money supply to prevent the deflation that this productivity surge would naturally create. Under a Bitcoin standard, that deflation would manifest as rising purchasing power for anyone holding the asset.

The reflexive element compounds the argument. As more participants recognize that Bitcoin captures technological deflation while fiat currency dilutes it, adoption increases. Increased adoption strengthens Bitcoin's network effects through Metcalfe's Law. Stronger network effects increase Bitcoin's monetary utility. Greater monetary utility attracts further adoption. The same self-reinforcing cycle that drives AI capability growth operates on Bitcoin's monetary network, with the added dimension that each new participant benefits from the purchasing power gains that flow from technological deflation into a fixed-supply monetary asset.

Both AI and Bitcoin are network-effect technologies where infrastructure providers capture disproportionate value as the network scales. Both exhibit reflexive adoption dynamics. Both benefit from Moore's Law cost declines. But Bitcoin adds a dimension that AI alone does not: it provides the monetary layer that preserves the value created by technological deflation.

The METR time horizon chart is not merely a measurement of AI progress. It is a measurement of deflationary pressure building in the global economy at an exponential rate. The question is not whether that pressure exists but whether individuals will allow the efficiency gains to be inflated away or hold bitcoin to capture them.

Early Riders has been writing about this trend for over a year. The acceleration just makes it more obvious.